face tracking has arrived in the full version + more! (the return of the devlog)

hi! we're working on veadotube. it's been a while since the last devlog, since we decided to only post new ones when we had bigger updates to share. well, the time has come!

veadotube full version has body and face tracking nodes now! it's actually been like that for about a month, but we just released a build fixing lots of bugs around it and also released a new video tutorial for early access members on how to build a tracking pipeline using said nodes.

to introduce tracking, we made a more traditional-looking-but-not-really default avatar for the full version of veadotube. introducing Tube-tan, the human version of Tube the deer:

they're a good starting point to test the new tracking nodes, but they can also be used with simpler nodes like cursor movement and whatnot!

to do body and face tracking, we've got a bunch of different solutions -- two new nodes that extract body keypoints from a video feed (yes you can track your feet!!!), plus native support for OpenSeeFace, the face tracking library made for VSeeFace.

we have also re-added support for the Kinect, both v1 and v2, so if you have a spare one lying around you can actually use it with veado now!

all these solutions are standardised inside of veadotube as Point Groups, so no matter which solution you use, you can use the same processing nodes for gaze detection, head rotation, and so on.
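to make the idea a bit more concrete, here's a tiny sketch of what "one shared structure, many trackers" can look like. this is NOT veadotube's actual code or API: `PointGroup`, the two tracker formats and `head_roll_degrees` are all made up for illustration. the point is just that once every tracker is converted to the same layout, a processing step only has to be written once.

```python
import math
from dataclasses import dataclass


@dataclass
class PointGroup:
    """hypothetical stand-in for a shared keypoint layout: name -> (x, y)."""
    points: dict


def from_tracker_a(raw):
    # assumption: made-up tracker A reports a flat list [left_x, left_y, right_x, right_y]
    return PointGroup({"left_eye": (raw[0], raw[1]), "right_eye": (raw[2], raw[3])})


def from_tracker_b(raw):
    # assumption: made-up tracker B reports a dict with its own key names
    return PointGroup({"left_eye": tuple(raw["eyeL"]), "right_eye": tuple(raw["eyeR"])})


def head_roll_degrees(group):
    # angle of the line between the eyes; works on any PointGroup,
    # regardless of which tracker produced it
    lx, ly = group.points["left_eye"]
    rx, ry = group.points["right_eye"]
    return math.degrees(math.atan2(ry - ly, rx - lx))


if __name__ == "__main__":
    a = from_tracker_a([0.0, 0.0, 1.0, 1.0])
    b = from_tracker_b({"eyeL": [0.0, 0.0], "eyeR": [1.0, 1.0]})
    print(head_roll_degrees(a), head_roll_degrees(b))
```

both trackers end up going through the exact same `head_roll_degrees` function, which is the whole benefit of standardising first and processing second.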

here's me going through the horrors of being scanned and tracked (and you can too!!)

on top of face tracking, we have introduced new tools to do lipsync stuff -- anything that does lipsync detection is now standardised into a structure we call Mouth Shape. it's literally a mouth shape:

you can then use the values from a mouth shape to control different states -- values such as the mouth size, how much of the teeth is showing, and so on:
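as a rough illustration of "values control states", here's a minimal sketch. everything in it is an assumption: the `MouthShape` fields, their 0 to 1 ranges, the state names and the thresholds are invented for the example and don't reflect how veadotube actually stores or uses a mouth shape.

```python
from dataclasses import dataclass


@dataclass
class MouthShape:
    """hypothetical mouth shape; both values assumed to be in the 0.0..1.0 range."""
    size: float   # how open the mouth is
    teeth: float  # how much of the teeth is showing


def pick_state(shape, open_threshold=0.15, teeth_threshold=0.5):
    # thresholds are arbitrary example numbers, not tuned values
    if shape.size < open_threshold:
        return "closed"
    if shape.teeth > teeth_threshold:
        return "open_teeth"
    return "open"


if __name__ == "__main__":
    print(pick_state(MouthShape(size=0.05, teeth=0.0)))
    print(pick_state(MouthShape(size=0.6, teeth=0.8)))
```

because the function only looks at the mouth shape values, it doesn't care whether they came from audio lipsync or from face tracking.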

you can currently use the Oculus Lip Sync node to do that (which should now finally work on M-series macOS machines as well!), or extract from the face tracking, or combine both solutions to get the best of both worlds. we also introduced Cherry Lip Sync as a new node for lipsync stuff :]
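one simple way to picture "combining both solutions" is a weighted blend of two mouth shapes. this is only a sketch under assumptions: the dict layout, the `blend_mouth` name and the idea of a single blend weight are made up here, and veadotube's nodes may combine things in a completely different way.

```python
def blend_mouth(audio, face, audio_weight=0.5):
    """blend two hypothetical mouth shapes given as dicts of name -> value.

    audio_weight = 1.0 means "trust audio lipsync only",
    audio_weight = 0.0 means "trust face tracking only".
    """
    # clamp the weight so out-of-range input can't produce odd values
    w = min(max(audio_weight, 0.0), 1.0)
    # only blend the values both sources actually provide
    shared = audio.keys() & face.keys()
    return {name: w * audio[name] + (1.0 - w) * face[name] for name in shared}


if __name__ == "__main__":
    from_audio = {"size": 1.0, "teeth": 0.0}
    from_face = {"size": 0.0, "teeth": 1.0}
    print(blend_mouth(from_audio, from_face, audio_weight=0.5))
```

a blend like this lets audio carry the fast open/close movement while the camera fills in details audio can't know about, and the weight gives one knob to lean on whichever source behaves better in a given setup.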

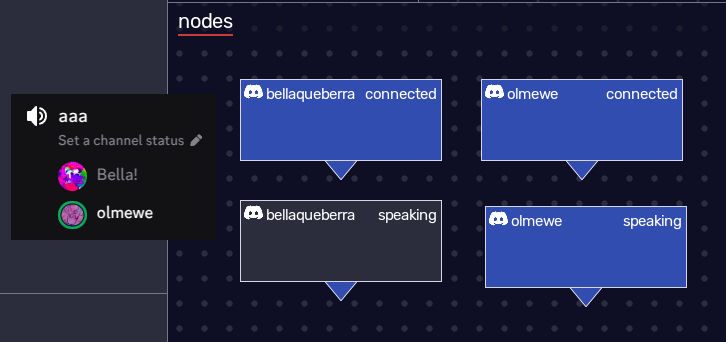

and as a bonus: we finally got Discord working with this thing oh god their API sucks never ask me anything ever again

so that's a little bit of everything for everybody i think!

we're now working on even more bug fixes and adding more integrations as we go (we have not forgotten about Wiimote support!!). there isn't much left for a final release other than making things Stable and Pretty and Localised and adding more useful nodes, so that's the direction we're headed! yippee <3

in the meantime, we'll keep updating the early access builds, and if you're interested you can get access to them through my ko-fi page (or through apoia.se for Brazilians)!

as for mini, we'll possibly be backporting some features at some point (bug fixes, more noise filter options, maybe we can shove in Discord support somehow). obviously, we're focusing on making the full version of veadotube the actual one with the most prominent features and whatnot. as i always say, mini is mini, we don't wanna bloat it and we think it's a good program as it is, etc etc

as always thank you so so much for the support <3 we're really happy to be able to deliver the veadotube we always wanted to make, with all the features and whatnot, and you guys make it possible through your continued support. see you soon!!

Comments

Wow! So much going on! Great progress, and I'm looking forward to purchasing the full version!

YOOOO, this is AWESOOOOME!!